We don't search online, we "google", so it is no surprise that Google has over 77 per cent of the search engine market, while the nearest competitor is Baidu with 8 per cent.

Google-owned Gmail has 1.2 billion users, giving it a 20 per cent share of the global email market. Then there's Facebook with over 2 billion monthly active users and the Facebook-owned WhatsApp messaging app, with 1.3 billion.

For search and social, Google and Facebook are inarguably two of the most powerful companies on the planet. On what seems like a weekly basis they hoover up smaller enterprises in fields like Artificial Intelligence (AI), machine learning, the Internet of Things (IoT), robotics and more. They have lots and lots of our data and clever ways of combining and analysing it to get to know us better. Worried?

This is what Dr Orla Lynskey, assistant professor with the Department of Law at the London School of Economics (LSE), calls "data power". Speaking at her alma mater, Trinity College Dublin, last week, Dr Lynskey gave a public lecture exploring the power of these tech giants, asking whether they should be reclassified as public utilities, much like water, natural gas, electricity and broadband internet services.

The worry is not just about the volume of data that these companies collect when we use their services - it is about what they do with this data, and how much knowledge and control individuals have (or don’t have) over this data, she explained. This is data power and the concern is how it is - or is not - being regulated.

Specific regulation

Current legislation that deals with these issues comes from a triad of data protection law, consumer protection law, and competition law. There is a pressing need to evaluate whether these laws are enough to keep the tech giants in check, or whether Google, Facebook and other data-rich companies warrant specific regulation, Lynskey said.

Consider the hefty €2.42 billion fine the European Commission recently imposed on Google over its search engine results. Google was judged to have “abused its dominant position by systematically favouring” its own price-comparison feature, Google Shopping, over competitors. Google must now, as the ruling states, “comply with the simple principle of giving equal treatment to rival comparison shopping services and its own service”.

Job done, you think. Existing legislation works. But it is not just about market dominance and antitrust rulings. “When we talk about data power we talk about the harm extending from this as more than just economic. There are societal implications,” said Lynskey.

She explained that “data power” is a new and multifaceted concept created to describe the influence of tech giants. It is economic clout mixed with the power to profile individuals, and the power to influence opinion-formation, including on policy issues. The latter is evidenced by Facebook’s difficulty with fake news during the US presidential election and Google’s autocomplete problem that favoured Holocaust denial search engine results after the search algorithm was gamed by extremist websites.

If “don’t be evil” once worked for Google as a quirky but sincere motto, it now seems passé. “Don’t be everywhere” seems more appropriate for these online information powerhouses that have, as Lynskey described, a “god’s eye view of individuals”. Lynskey pointed out research showing that while 40 per cent of the Irish don’t mind providing personal information in exchange for free online services, 46 per cent “totally disagree” with this.

But it is a free market, so people can ostensibly choose another search engine or social media platform - and if they don't agree with the terms of service, they don't have to sign up. Frank Pasquale, author of The Black Box Society, a book on the extent to which algorithms control money and information, puts it in starker terms: "What the search industry blandly calls 'competition' for users and 'consent' to data collection looks increasingly like monopoly and coercion."

"In terms of 'data power', we're only seeing the tip of the iceberg at this stage," says Brandt Dainow, researcher in computer ethics at Maynooth University and an independent data analyst. "The concern is around what is being called algorithmic justice, which is that these algorithms are making decisions about us and we have no way of knowing if they are fair. Most of the time we don't even know the decision has been made and if so, on what basis."

To illustrate this algorithmic (in)justice, Dainow gives an example (which Google has since addressed) of previous searches for the term “professional hair” that returned a majority of images of white people, while the term “unprofessional hair” mostly returned images of black people.

Societal biases

“These algorithms are merely taking existing societal biases and reflecting them back at us, but the danger is that most people assume these technologies are objective and accurate,” he explains.

Racist search results are one thing, but as data is bought, sold, merged, shared and fed into algorithms, we now have companies like facial recognition startup Cloud Walk. This company analyses how people walk and behave in order to predict their likelihood of committing a crime, warning police (or is that the PreCrime department?) when the risk becomes “extremely high” so that they can intervene before a crime takes place.

Because these predictions are made by computers, the assumption is that they must be accurate, or at least better than human judgment. Yet these systems will be (and already are) run by private companies whose algorithmic processes lack transparency, even as they control more and more aspects of society, from smart cities to criminal justice, says Dainow.

“This scares the shit out of me. It will make 1984 look like a teddy bears’ picnic compared to what can be done,” he says. “It will be subtle. It won’t be obvious. We are potentially staring down the barrel of a corporate dictatorship.”

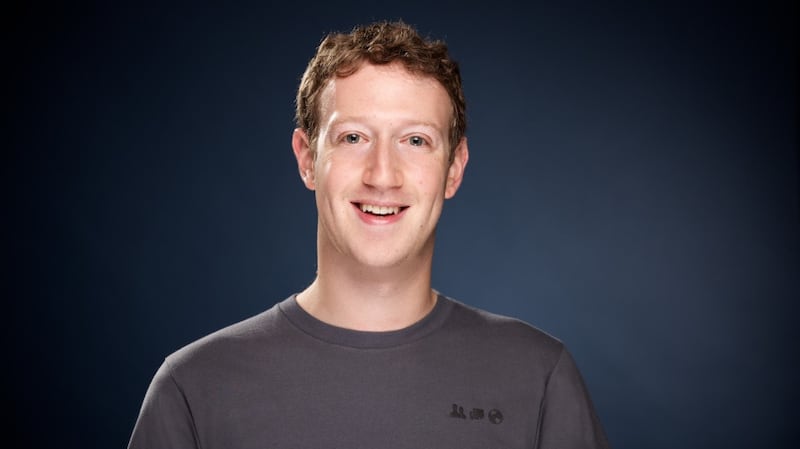

Would a new category of government regulation prevent this kind of dystopian future, and is there even a possibility of getting these tech giants reclassified as public utilities or something similar? Funnily enough, Mark Zuckerberg used to like referring to Facebook as a “social utility”, but perhaps he realised the ramifications of the word “utility”, because it has since disappeared from the official mission statement. With mammoth user bases and little or no competition, it could be argued that these kinds of online services are becoming essential utilities that people cannot live without and require regulated access to.

Lynskey, however, referred to the difficulties with this kind of regulation. The Collingridge dilemma, for example, articulates the trade-off between power and information where it is impossible to predict the eventual influence of a technology in the early stages, but once it has established itself it is then very difficult to control or change.

Stifling effect

Another dilemma exists: the possible stifling effect of regulation and how it could be either beneficial or detrimental to the individual.

"One of the main challenges is that you cannot, as a nation state, regulate the power of a corporation that operates globally. And from the point of view of these corporations, it seems that they see themselves as championing freedom of speech," explains Dr Eugenia Siapera, deputy director of the Institute for Future Media and Journalism (FUJO) and the chair of the MA Social Media Communications at Dublin City University.

“They see themselves as having a role to play. A European country might want to regulate to curb racism or misogyny, but a dictatorship might want to regulate in order to control freedom of speech. From the point of view of these various social media platforms, they see themselves at the forefront of protecting freedom of speech,” she adds.

On the other hand, Facebook has a blanket no-nudity clause, which has led to pictures of Denmark’s Little Mermaid and the Venus de Milo being classified as obscene and removed, while free speech can allow hate speech to trickle through.

“This is exactly where, in terms of regulation, a lot of the tensions emerge because these corporations operate within US law. If someone like Trump determines one day that, for example, anti-fascist protesters are illegal and a terrorist group, then Facebook has to implement this globally,” Siapera points out.

This idea of “data power” is, without a doubt, a complex issue woven from legal, economic, societal and ethical strands. It is a multifaceted problem that leaves us at times frustrated by an artificial choice: use the platforms everyone else is on and give up power and autonomy over our personal and private data, or opt out, only to find we have opted out of essential, dare we say, utilities that it has become almost impossible to live without.

We like having free internet services and data is required to make them better - and we are reasonable enough to expect that private corporations want to make profits. But without adequate protections, transparency and autonomy (on a national and individual level), we cannot help but wonder how much we are being monitored and to what extent those busy little algorithms have decided to alter what we see based on what they think is best.

Isn’t “free” beginning to feel a lot like a ball and chain forged of bits and bytes?