When Kate’s 13-year-old son took up Minecraft and Fortnite, she did not worry. He played in a room where she could keep an eye on him.

But about six weeks later, Kate saw something appalling pop up on the screen – a video of bestiality involving a young boy. Horrified, she scrolled through her son’s account on Discord, a platform where gamers can chat while playing. The conversations were filled with graphic language and imagery of sexual acts posted by others, she said.

Her son broke into tears when she questioned him last month. “I think it’s a huge weight off them for somebody to step in and say, ‘actually this is child abuse, and you’re being abused, and you’re a victim here’,” said Kate, who asked not to be identified by her full name to protect her family’s privacy.

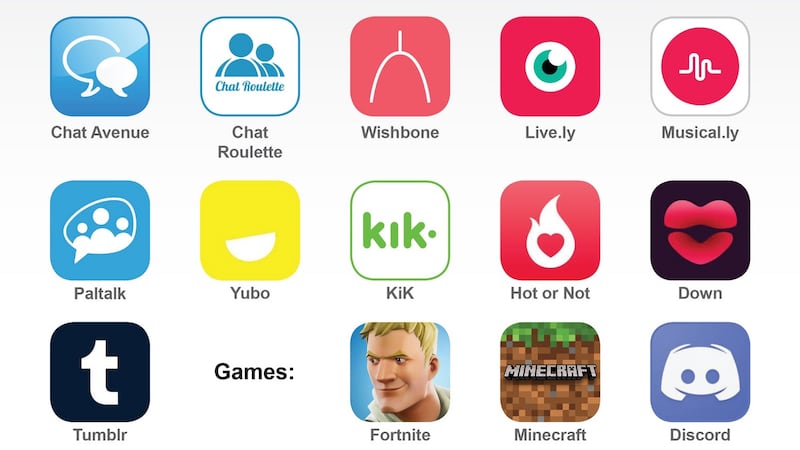

Sexual predators have found an easy access point into the lives of young people: They are meeting them online through multiplayer video games and chat apps, making virtual connections right in their victims’ homes.

Criminals strike up a conversation and gradually build trust. Often they pose as children. Their goal, typically, is to dupe children into sharing sexually explicit photos and videos of themselves – which they use as blackmail for more imagery, much of it increasingly graphic and violent.

The tech industry has made only tepid efforts to combat an explosion of child sexual abuse imagery on the internet. There are tools to detect previously identified abuse content, but scanning for new images – like those extorted in real time from young gamers – is more difficult. While a handful of products have detection systems in place, there is little incentive under the law to tackle the problem, as companies are largely not held responsible for illegal content posted on their websites.

In America, there has been some success in catching perpetrators of "sextortion". In May, a California man was sentenced to 14 years in prison for coercing an 11-year-old girl "into producing child pornography" after meeting her through the online game Clash of Clans. An Illinois man received a 15-year sentence in 2017 after threatening to rape two boys in Massachusetts whom he had met over Xbox Live, adding that he would kill one of them.

"The first threat is, 'If you don't do it, I'm going to post on social media, and by the way, I've got a list of your family members, and I'm going to send it all to them,'" said Matt Wright, a special agent with the US department of homeland security. "If they don't send another picture, they'll say: 'Here's your address – I know where you live. I'm going to come kill your family.'"

The trauma can be overwhelming for the young victims.

There are many ways for gamers to meet online. They can use built-in chat features on consoles such as Xbox and services like Steam or connect on sites such as Discord and Twitch. The games have become extremely social, and developing relationships with strangers on them is normal.

In New Jersey, law enforcement officials from across the state took over a building near the Jersey Shore last year and began chatting online under assumed identities, posing as children. In less than a week, they arrested 24 people.

Authorities did it again, this time in Bergen County, a suburb close to New York City. They made 17 arrests. And they did it once more, in Somerset County, arresting 19. One defendant was sentenced to prison, while the other cases are still being prosecuted.

After the sting, officials hoped to uncover a pattern that could help in future investigations.

But they found none; those arrested came from all walks of life.

When announcing the arrests, authorities highlighted Fortnite, Minecraft and Roblox as platforms where offenders began conversations before moving to chat apps. Nearly all those arrested had made arrangements to meet in person.

In Tennessee, a girl attending high school thought she had made a new female friend on Kik Messenger. They chatted for six months. After the teenager shared a partially nude photo of herself, the "friend" became threatening and demanded that she record herself performing explicit acts. The girl told her mother, who called police. The photo was never shared publicly, but she said in an interview she was haunted by the experience years later. "I thought for a long time that there was something wrong with me or that I was a bad person," she said. "Now that I've gotten to college, I'll talk to my friends about it, and there have been so many girls who have said, 'that exact same thing happened to me'."

There are a few seemingly simple protections against online predators, but logistics, gaming culture and financial concerns present obstacles.

Companies could require identification and parental approvals to ensure games are played by people of the same age. But many gamers have resisted giving up anonymity.

Microsoft, which owns Xbox and the popular game Minecraft, said it planned to release free software early next year that could recognise some forms of grooming and sextortion.

Marc-Antoine Durand, chief operating officer of Yubo, a video chat app based in France that is popular among teenagers, said it watches for grooming behaviour with software from Two Hat Security, a Canadian firm. Yubo also disables accounts when it finds an age discrepancy, often requiring users to provide a government-issued ID. But users frequently object to providing documentation, and many children do not possess it.

Discord said it scanned shared images for known illegal material and that moderators reviewed chats considered a high risk. The company does not, however, automatically monitor conversations for grooming, suggesting that doing so would be unreliable and a violation of privacy.

Some of the biggest gaming companies provided few, if any, details about their practices. Epic Games, creator of Fortnite, which has roughly 250 million users, did not respond to multiple messages seeking comment.

Sony, the maker of PlayStation, which had nearly 100 million monthly active users earlier this year, pointed to its tutorials on parental controls and tools that let users report abusive behaviour.

The solution many game developers and online safety experts return to is that parents need to know what their children are playing and that children need to know what tools are available to them. Sometimes that means blocking users and shutting off chat functions, and sometimes it means monitoring the games as they are being played. – The New York Times