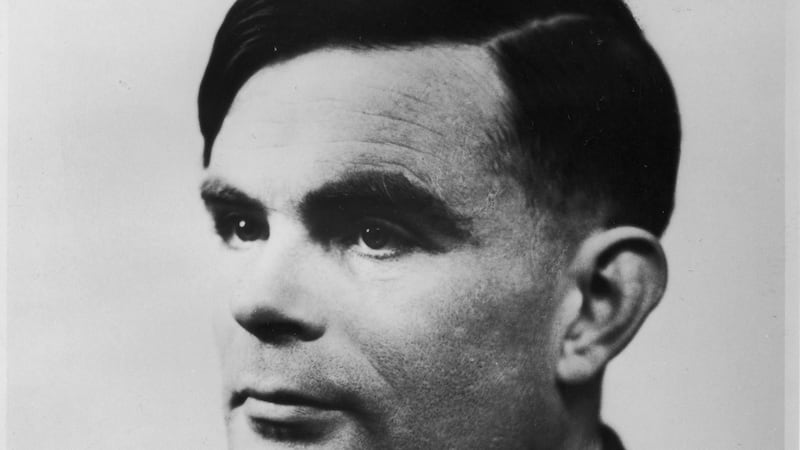

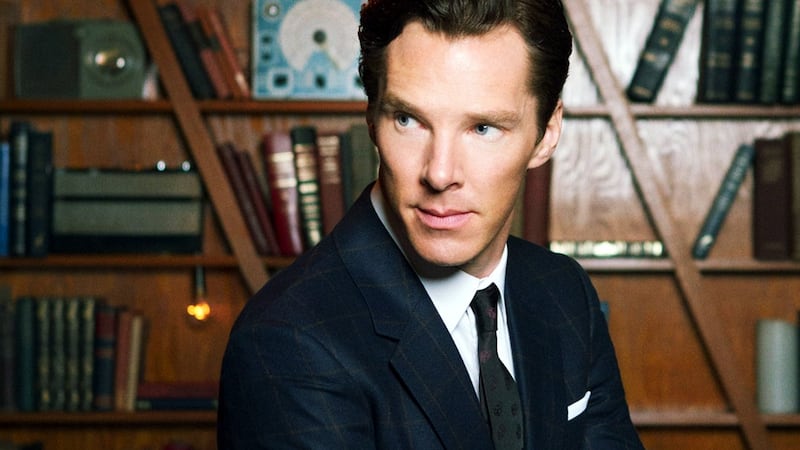

Alan Turing became a household name after the 2014 film The Imitation Game, which detailed the crucial role the British mathematician played in breaking the German Enigma cipher during the Second World War, work that brought the conflict to a quicker end and saved countless lives.

The research that Turing went on to do after the war also has a profound legacy: the fields of computer science and artificial intelligence both stem from the logician’s work. The concept of machine intelligence was the focus of much of Turing’s work through the late 1940s, and in 1950 he proposed a way to measure it.

The “Turing Test” set out to determine whether a computer could exhibit behaviour indistinguishable from a human’s. One person would chat via a computer terminal, sometimes communicating with another human and sometimes with a computer. Could the computer convincingly imitate a human?

"When Turing first set out the notion of artificial intelligence it gave this very generalised notion of AI, where it was testing against intelligence as exemplified by the human brain. The task was for the computer to think and be creative like a person," says Kieran McCorry, National Technical Officer with Microsoft Ireland.

Fascinating question

The question of whether computers could think sparked a lot of academic interest in the 1950s. “It was a fascinating question,” explains McCorry, “because it made a leap from mathematics to philosophy”. As computing technology was limited, the conversation stayed mainly theoretical, and while this period provided the foundation for computer science, talk about AI soon went quiet. “The late 1950s to the early 1980s are a doldrum period in AI,” says McCorry.

In the 1980s, the topic of AI resurfaced alongside advances in robotics, but it was not until the late 1990s that AI captured the public imagination again, when IBM's supercomputer Deep Blue beat world chess champion Garry Kasparov.

“This was pitted as a fantastic challenge between man and machine. Conflict between man and machine is a background narrative that always runs through AI – people want to know will machines beat us, or overtake us?” says McCorry.

“Machines beating people was nothing new, a steam engine can beat a person in the ability to go fast and that happened quite some time before. But Deep Blue was the first time a machine beat someone in an endeavour that is very cerebral and intelligence-based.”

Deep Blue represented the high-water mark for one way of thinking about artificial intelligence. As technology continued to advance and AI began to be applied more practically, the broad, philosophical question of whether a machine could effectively replicate a human lost sway, and an alternative view began to emerge.

“Kasparov says that the best chess player is not a person, or a machine, but a combination of the two,” says McCorry, “and that is certainly Microsoft’s view of AI. It is about using technology to augment human capability”.

Through the late 1990s and 2000s, artificial intelligence became woven into many people’s day-to-day lives through technologies like voice recognition and driver-assistance features in cars, which presented an updated vision of how AI could fit into society.

“A very relevant definition for AI today, which I think links back to where Turing was going all those years ago,” says McCorry, “is to look at a machine’s ability to perceive, to process the perceived information and identify patterns, and then to perform some kind of reasoning based on those patterns”.

“Most recently, there have been three factors that have come together that act as an inflection point for AI,” says McCorry. The first of these is the sheer amount of data: “We have vast quantities of data that has never been available before. There are 11 billion internet-connected devices and less than 8 billion people, all the devices are generating huge streams of data.”

Ostensible interactions

The second factor is the ability to store this data in the cloud, along with the computing power to process it. The final factor McCorry cites is improvements in algorithms: “these have allowed us to do so much more with data”.

While we use AI in one form or another on a daily basis, the ostensible interactions we perform using Cortana or Siri, or through the GPS in our car, are just the surface level, according to Gerard Doyle, network manager for Technology Ireland ICT Skillnet. “By far the biggest impact of AI – and the least obvious one for most people – is in data science,” he says. “In the era of ‘big data’, where we collect vast amounts of information that are virtually impossible for humans to process or analyse, AI has truly come into its own.”

“Through ‘machine learning’ – a core technology in AI – a software program trains itself, by adjusting its algorithm, to analyse and learn from a vast array of data and then apply what it has learned to perform human tasks.”
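To make Doyle's description concrete, here is a minimal sketch of a program that "trains itself" by repeatedly adjusting its own parameters to fit data. It is an illustration only, not a description of any system mentioned in this article: the data, the `train` function, the learning rate and the step count are all assumptions chosen for the example, and the technique shown (gradient descent on a straight-line model) is just one simple form of machine learning.

```python
# Hypothetical toy data: the targets happen to follow y = 2x + 1,
# but the program is never told that rule.
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.0, 3.0, 5.0, 7.0, 9.0]


def train(xs, ys, learning_rate=0.05, steps=2000):
    """Fit y ~ w * x + b by gradient descent on mean squared error."""
    w, b = 0.0, 0.0  # start with no knowledge of the pattern
    n = len(xs)
    for _ in range(steps):
        # Measure how the average squared error changes with w and b.
        grad_w = sum(2 * (w * x + b - y) * x for x, y in zip(xs, ys)) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in zip(xs, ys)) / n
        # Nudge both parameters in the direction that reduces the error.
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b


w, b = train(xs, ys)

# Apply what was learned to an input the program has not seen before.
prediction = w * 10.0 + b
print(f"learned w={w:.3f}, b={b:.3f}, prediction for x=10: {prediction:.3f}")
```

On this toy data the learned values should settle close to w = 2 and b = 1. Real systems use far larger models and datasets, but the loop of measuring error and adjusting parameters follows the same idea.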

It is in this realm of data analysis that AI has a vital role to play, and where Ireland is beginning to show its capability to lead through AI research coming from universities and research institutes connected to some of the large multinational technology companies based here.

Whether a computer can effectively imitate a human has become a moot point. “Many AI systems are displaying intelligence at a scale that is far beyond that of a human,” says Doyle. “It may be that in the future we will conclude that Turing picked the wrong benchmark – the human – and trying to prove that a machine can answer questions like a human may become an historical irrelevance.”