In an ill-informed approach by politicians to regulate internet abuse – via vague proposals to make company directors criminally liable for online hate speech and, even worse, to banish platform user anonymity – Ireland is careening towards regulatory nuclear options that are more likely to increase, rather than limit, online harms.

The proposed Bills are a misguided transpolitical attempt to get populist initiatives advanced in legislation that would be unworkable and, far worse, establish a model for stripping away important protections for human rights defenders, activists and vulnerable individuals globally. All, ironically, in the name of tackling online harm.

The first part of this problematical legal pairing is the Government's intent to make directors of social media platforms criminally liable for online hate that they fail to remove from platforms. Senators Malcolm Byrne and Shane Cassells are pushing for an amendment to the draft online safety and media commission Bill. The proposals have been welcomed by Minister for Media Catherine Martin.

The second is the Sinn Féin-proposed Bill to make platforms "accountable for defamation if they failed to or refused to divulge the identity of an account holder who made defamatory statements on their platform".

This appalling Bill has now passed its first stage in the Dáil. You’d be hard pressed to find a single human rights organisation anywhere in the world that would view it with anything but horror, especially because Ireland is the Europe, Middle East and Africa headquarters for most of the platforms.

Some of the most oppressive governments in the world fall into those markets. For more than two decades I've talked to human rights organisations and incredibly courageous individual human rights defenders about the challenges of online security and hate campaigns. Unfailingly, all speak to the critical importance of online anonymity, which protects them, their families and their contacts. Anonymity for them can be a life or death matter.

To demand that platforms know the identity of every single user and compel platforms to divulge that information at state or law enforcement request is . . . insane. Sinn Féin should know better than to make a shallow attempt to gain votes here while utterly ignoring the most vulnerable and exposed people of the world. So much for the cries of global political “solidarity”.

Elizabeth Farries, assistant professor with the UCD Centre for Digital Policy, says: "There are examples right now, every day, of the consequences of the loss of online anonymity. It's important to remember that online anonymity protects marginalised groups."

She notes that the United Nations states that removing online anonymity removes important protections, and points to the recent example of Russian protesters being identified via online accounts, arrested and imprisoned.

As for going after company directors, this is basically a proposal to have Ireland – Ireland! – regulate online speech internationally, easily one of the most difficult and thorny online problems in the world. It has been argued that somehow such a law would “only” be for Ireland, but we already have pan-EU responsibility for these companies as principal regulator in the closely related areas of data protection and privacy.

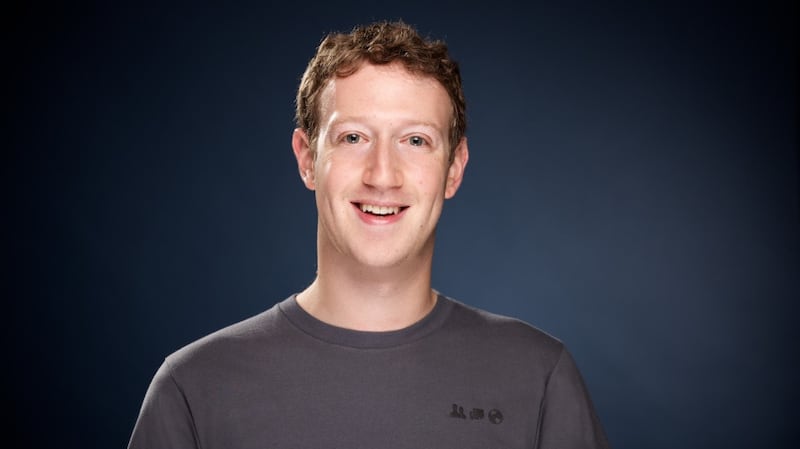

It’s not even clear which “directors” would be liable. Mark Zuckerberg? Fat chance. Irish directors then. But existing US and EU legal frameworks relieve platforms of responsibility for what users post.

Making directors criminally responsible in the more specific case of failing to remove content deemed harmful is possible, yes, but Farries says questions arise as to what this would look like in practice (is this about individual posters, or the effectiveness of moderating algorithms?), whether it would work and what might be the unintended consequences.

Simon McGarr, director of Data Compliance Europe and a solicitor with McGarr Solicitors, the law firm for rights advocates Digital Rights Ireland, notes: "The proposal to create a new criminal offence would require the online safety commission to be resourced for prosecutorial as well as regulatory purposes."

We can already see how well that’s worked out for the Data Protection Commissioner’s Office, which has struggled to squeeze adequate funding out of politicians for two decades.

McGarr says the online safety Bill, as a whole, “has been misconceived from the start. It goes beyond what is required by European law and will require staggering resourcing to be effective. The proposal to hold directors of social media companies personally liable in the event of non-compliance with the commission’s decisions layers further costs on to the Bill.”

And, he adds, such a provision is unlikely to create many problems for those tech behemoths. Instead it “is most likely to affect innovative new entrants and competitors, rather than well-resourced, established companies with large compliance resources already in place”.

Both Farries and McGarr note, also, that fresh European legislation is pending across the areas of regulating online platforms, digital markets and services, online harms, and artificial intelligence. And they add that Ireland already has effective laws that enable the Garda to work with platforms to identify lawbreakers – though they are woefully underutilised.

We should dump the online safety Bill and wait for EU legislation that we will have the flexibility to transpose at country level.

And we should not even go near abandoning online anonymity. Or we will open the door to oppressive countries everywhere citing our dangerous example – a politically neutral, supposedly pro-human rights European democracy policing international online speech and forcing companies to expose the identity of platform users.